If you want to bring your website to the first page of search results and keep it there, you need to understand its current SEO condition. To do that, you will need to perform an SEO audit.

Although there are several ways you can promote your website and business online, SEO is one of the best options. In fact, SEO gives 5.66x more opportunities than paid search.

Search engine usage is enormous. In the US alone, 40-60 billion searches happen each month. Furthermore, about 15% of the queries Google handles every day are ones it has never seen before.

So, to get the most out of your SEO efforts and improve your search engine rankings, the first step is to know your website’s current SEO condition, and a well-researched SEO audit report can help with that.

Here’s the Complete Guide for Beginners to Perform an SEO Audit

On-page and off-page SEO work in parallel to determine your website’s ranking in the search engine result pages. You need to audit several aspects of each to decide whether your website is search engine friendly.

Search engine bots rely on your on-page SEO to understand the context of your website, and then crawl, index, and rank it accordingly.

Before you start auditing your website, you will need SEO audit tools to streamline the process.

If you have any earlier SEO audit reports, use them as a reference. You can compare a few data points for verification.

On-Page SEO Analysis

Keyword Research

Keywords are the backbone of your website’s SEO. If you want to attract plenty of high-quality traffic, you need to find keywords in your niche that are searched often but face little competition.

You can also brainstorm the questions users ask when searching for something related to your niche and include them in your keywords. About 8% of search queries are phrased as questions.

To research keywords for your website, look at your competitors: see which of their webpages perform best and which keywords they rank for. You can also start your research with Ubersuggest or Google Keyword Planner.

Google also helps through autocomplete and the “People also search for” box in the SERP. These show what else people are searching for, and you can include the relevant phrases as your LSI keywords.

Optimize Content and Meta Tags

Meta tags are snippets that describe what a particular webpage contains. These tags cannot be seen on the page itself, but they are present in the source code. Content is the single most important ranking factor for your website. Ensure that relevant keywords are mentioned at regular intervals.

Don’t stuff keywords into the content; aim to mention them naturally.

Bots read these tags to quickly understand the content a page hosts. So, after keyword research, optimize the meta tags by including your keywords.

● Title

After content, the title tag is the most important on-page SEO element for ranking your website. Your titles should appeal to users and make them click on your result.

The title is the most prominent part of your SEO strategy, as searchers check your title before anything else on your webpage. The ideal length of a title is 50-60 characters.

● Header

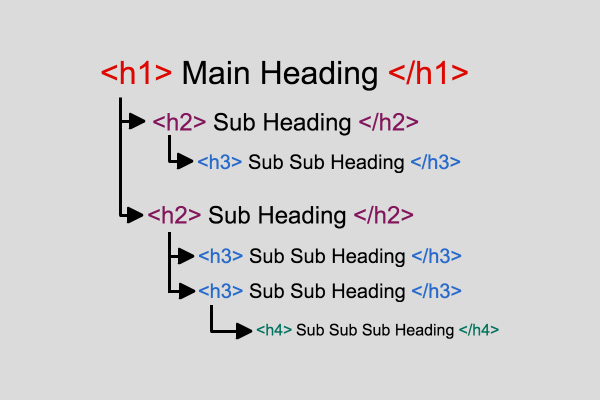

HTML has six header tags, from <h1> to <h6>. They help you divide your content into sections and sub-points.

You can include LSI keywords in your header tags when introducing a sub-topic in your webpage.
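
To review how a page uses its header tags, it can help to list them in order. The following is a minimal sketch, assuming you already have the page’s HTML as a string; the class name `HeadingOutline` and the function `heading_outline` are illustrative names, not part of any SEO tool.

```python
from html.parser import HTMLParser

HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class HeadingOutline(HTMLParser):
    """Collects (level, text) pairs for every h1..h6 element in a page."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self._level = None  # level of the heading currently open, if any
        self._parts = []    # text fragments seen inside that heading

    def handle_starttag(self, tag, attrs):
        if tag in HEADING_TAGS:
            self._level = int(tag[1])
            self._parts = []

    def handle_data(self, data):
        if self._level is not None:
            self._parts.append(data)

    def handle_endtag(self, tag):
        if tag in HEADING_TAGS and self._level is not None:
            self.headings.append((self._level, "".join(self._parts).strip()))
            self._level = None


def heading_outline(html):
    """Return the headings of an HTML document in document order."""
    parser = HeadingOutline()
    parser.feed(html)
    return parser.headings
```

Reading the resulting list top to bottom shows quickly whether a page has a single main heading and whether the sub-topics carry the phrases you intended.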

● Description

A meta description is a summary of the content of your webpage. It conveys the crux of the page, and search engines show it in the SERP to let the user know more about the page.

Search engines also highlight the search query if it matches a phrase in the description, so consider adding your keywords to it. The ideal length of a meta description is up to about 160 characters.
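
The length guidance for titles and descriptions can be checked automatically. This is a rough sketch, assuming the page’s HTML is available as a string; `MetaExtractor` and `check_meta` are hypothetical names, and the default limits simply mirror the 50-60 and 160 character figures mentioned in this article.

```python
from html.parser import HTMLParser


class MetaExtractor(HTMLParser):
    """Pulls the title text and the meta description out of a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = (attrs.get("content") or "").strip()

    def handle_data(self, data):
        if self._in_title:
            self.title += data

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False


def check_meta(html, title_range=(50, 60), description_max=160):
    """Report title and description lengths against the given limits."""
    parser = MetaExtractor()
    parser.feed(html)
    title = parser.title.strip()
    return {
        "title_length": len(title),
        "title_ok": title_range[0] <= len(title) <= title_range[1],
        "description_length": len(parser.description),
        # An empty description counts as a failure, not as "short enough".
        "description_ok": 0 < len(parser.description) <= description_max,
    }
```

The limits are passed as parameters because they are rules of thumb rather than hard requirements.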

● URL

A search engine friendly URL should be intuitive not only to users but also to bots. Replace URLs made of random strings of characters with relevant, keyword-rich URLs on all your pages.
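
One common way to get readable URLs is to derive a “slug” from the page title. The sketch below is one possible approach under simple assumptions (ASCII-only output, a word limit of six); `slugify` and `max_words` are illustrative names chosen for this example.

```python
import re
import unicodedata


def slugify(title, max_words=6):
    """Turn a page title into a short, lowercase, hyphen-separated URL slug."""
    # Decompose accented characters and drop anything outside ASCII.
    text = unicodedata.normalize("NFKD", title)
    text = text.encode("ascii", "ignore").decode("ascii").lower()
    # Keep runs of letters and digits; punctuation and spaces act as separators.
    words = re.findall(r"[a-z0-9]+", text)
    return "-".join(words[:max_words])
```

For instance, a page titled “How to Perform an SEO Audit” would live at a path ending in `how-to-perform-an-seo-audit` rather than at an opaque ID.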

Loading Speed

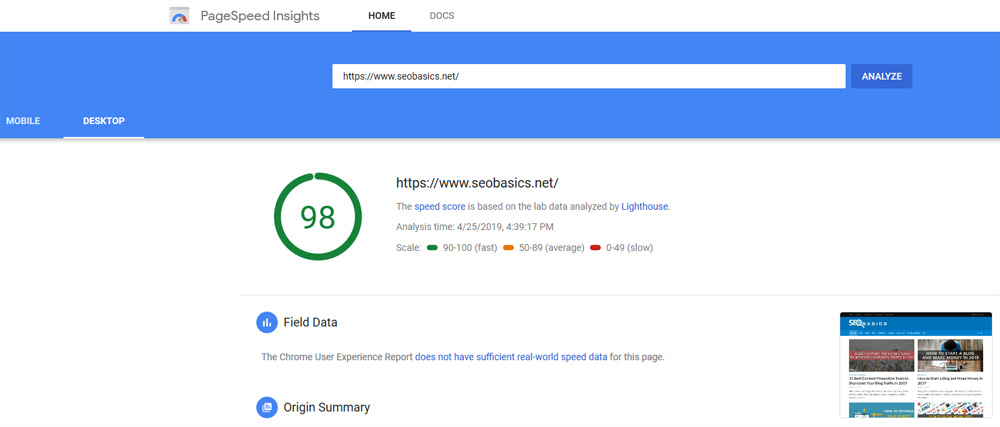

Google has confirmed that website loading speed is a ranking factor. A website that takes a long time to load gives visitors a bad user experience, as they are forced to wait before they can read anything. Although there is no official target for load time, John Mueller has tweeted that you should aim for a loading speed of around 2-3 seconds.

To optimize your page loading speed, first measure it, and then check the source code to find which code snippets are preventing the page from loading quickly. Remove those unneeded blocks of code, and you should see the loading speed improve.

PageSpeed Insights and GTmetrix are both great tools for measuring loading speed. Personally, I use PageSpeed Insights. You enter the URL of your website to get a score, and PageSpeed Insights provides a detailed report of what you should optimize, from CSS files to images.

SSL

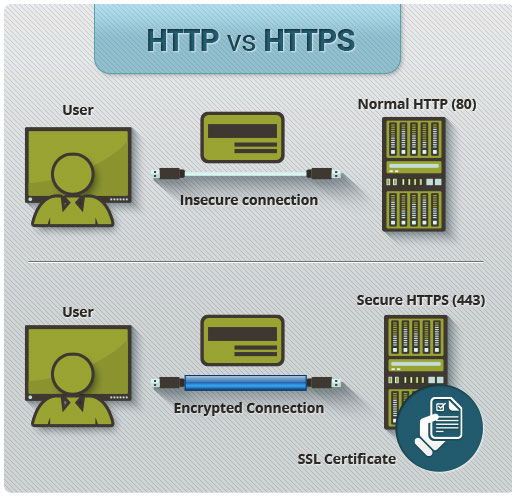

SSL secures the connection between the visitor’s web browser and the website’s web server by encrypting the data exchanged between them. According to Moz, over half of the results on the first page are HTTPS.

It gives your users a secure connection while they access the website. Google has confirmed HTTPS as a ranking signal. To enable HTTPS, you need an SSL certificate installed on your web server. Ask your hosting provider, such as SiteGround or Bluehost, or, if your host doesn’t offer SSL, get a certificate from a free provider like Cloudflare, SSL For Free, or Let’s Encrypt as soon as possible.

Mobile Friendliness

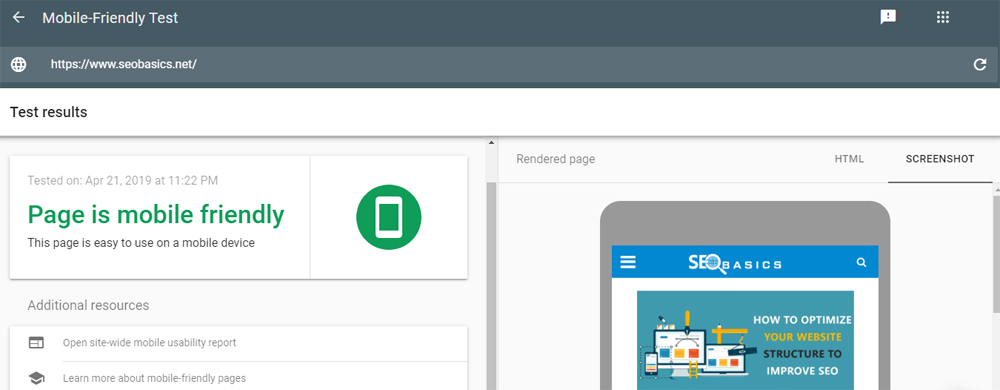

Google announced last year that it supports mobile-first indexing. This means Google primarily uses the mobile version of your website for indexing and ranking, so your website needs to work well on smartphone screens.

You need to check whether your website is mobile friendly. You can use the Mobile-Friendly Test tool for an instant check.

Usually, if you are using WordPress as your content management system, your theme will most probably be compatible with smartphones.

Optimize the Images

This is one of the most overlooked techniques for improving your website’s SEO value. Users can see what an image represents, but search engine bots cannot readily read the context of an image.

Bots check the ALT tag of an image to comprehend what it represents. To find out which images lack ALT tags, you can use a tool by Internet Marketing Ninjas. Just enter the URL of your website and the tool will list the images that are missing ALT tags.

Verify that every image on your website defines an ALT value that tells search engine bots what the image represents. A good ALT value is a short, specific description of the image.

For your images, you can include your keywords or LSI keyword phrases to show how the image relates to the content. For eCommerce, ALT tags can help improve search engine ranking.
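
If you would rather check a page yourself than rely on an online tool, the idea can be sketched in a few lines. This example assumes you have the page’s HTML as a string; `MissingAltFinder` and `images_missing_alt` are hypothetical names, and treating a blank value as “missing” is a choice made for this sketch.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Records the src of every img element without a usable alt value."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attrs = dict(attrs)
        alt = attrs.get("alt")
        # Flag images with no alt attribute or a blank one. Purely decorative
        # images may use an empty alt on purpose, so review the list by hand.
        if alt is None or not alt.strip():
            self.missing.append(attrs.get("src") or "(no src)")


def images_missing_alt(html):
    """Return the src values of images that lack ALT text."""
    finder = MissingAltFinder()
    finder.feed(html)
    return finder.missing
```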

Accessibility and XML Sitemap

Make sure that all the relevant pages of your website are accessible to both users and bots. Otherwise, what is the benefit of creating relevant and excellent content if nobody can reach it?

The ideal practice is to create an XML sitemap, which tells search engines about the pages on your website.

You can use SEO Site Checkup to know if your website has a sitemap. If you want to create the sitemap from scratch, you can use Small SEO Tools’ sitemap generator.
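
For a small site, a basic sitemap can also be generated with a short script. The sketch below follows the sitemaps.org format with only the required `loc` element for each page; `build_sitemap` is an illustrative name, and the list of URLs is assumed to come from wherever you keep your page inventory.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal XML sitemap (as a string) listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body
```

The returned string would typically be saved as `sitemap.xml` at the root of the website and submitted to the search engines you care about.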

Check for Google Penalties

Check that the website has not been penalized by search engines. Unless you get a manual penalty, you won’t receive any notification from the search engine that your website is penalized.

If your traffic in Google Analytics drops suddenly and you don’t know the reason, check the following things.

Audit your content before anything else. Check that your content is plagiarism-free and delete any pages that contain plagiarised content. You can use the Free Plagiarism Checker tool for this.

Get rid of low-quality backlinks before Google penalizes you. How to check your backlinks is covered later in this article. When you find toxic links, ask the linking site to remove them or disavow them in Google Search Console, so Google won’t consider them.

Also, check your website for doorway or gateway pages that lead visitors to an unintended website. Moreover, don’t stuff your sidebars and headers with banners and affiliate links, and never use any black hat technique on your website.

Robots.txt

Robots.txt is a file that lists the resources that crawlers should not crawl and that you do not want shown in the SERP. If you don’t add a robots.txt file, you lose control over how your webpages are crawled and indexed, and bots will crawl everything that is hosted on your website.
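
During an audit it is worth confirming that your rules block what you intend and nothing more. Here is a small sketch using Python’s standard `urllib.robotparser`; the sample rules and the `/admin/` and `/cart/` paths are made up for illustration, and `is_crawlable` is a hypothetical helper name.

```python
from urllib.robotparser import RobotFileParser

# Example rules only; a real robots.txt lives at the root of your website.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart/
"""


def is_crawlable(robots_txt, url, user_agent="*"):
    """Return True if the given robots.txt text allows user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)
```

Running your most important URLs through a check like this can catch the case where a key page has been blocked by accident.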

Broken Links

Broken links point to dead pages that return 404 errors, i.e., page not found. Ensure that there are no broken links on your website, because if Google bots find them, your website ranking will take a hit.

Whenever visitors click on a broken link, they land on a 404 page and end up with a bad user experience. Moreover, the bounce rate will also increase as users might leave your website due to dead links.
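
A simple broken-link check requests each URL and looks at the status code. The following is a minimal sketch using only the standard library; `http_status` and `find_broken_links` are illustrative names, and treating any status of 400 or above as broken is an assumption of this example.

```python
import urllib.error
import urllib.request


def http_status(url, timeout=10):
    """Return the HTTP status code for url, or None if the request fails outright."""
    # HEAD avoids downloading the body; some servers reject it, so a GET
    # fallback may be needed in practice.
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code
    except (urllib.error.URLError, OSError):
        return None


def find_broken_links(urls, get_status=http_status):
    """Return (url, status) pairs for links that error out or return 4xx/5xx."""
    broken = []
    for url in urls:
        status = get_status(url)
        if status is None or status >= 400:
            broken.append((url, status))
    return broken
```

The `get_status` parameter lets you swap in a different fetcher, for example one that adds delays between requests so you do not overload your own server.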

301 Redirection

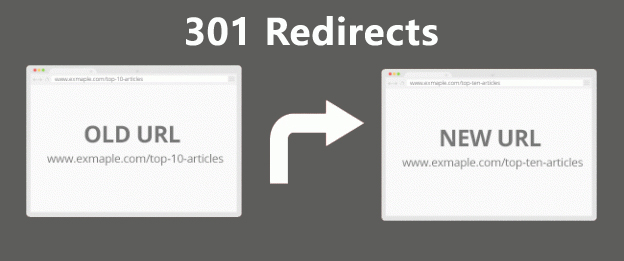

301 redirection is important. If a webpage has permanently moved to a new URL, you can signal this with a 301 redirect. Otherwise, Google bots won’t know that the resource now lives at a new location.

For example, suppose you have a webpage www.example.com/webpage1 which is already ranking for a group of specific keywords.

Now, for whatever reason, you have taken this page down and moved the same content to a new URL, www.example.com/webpage2. How would search engines know that the content of webpage1 has moved to webpage2? For them, webpage2 is an entirely new resource, and that page has to work hard to earn its ranking.

If webpage1 is ranking in the SERP, users who click it should be redirected to webpage2. Otherwise, they will get a 404 error, and your hard work of earning the ranking for webpage1 will be worth nothing. To redirect both users and the link juice, a 301 redirect must be used.
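
In practice, redirects are usually configured in your web server or CMS, but the mechanism itself is simple. The sketch below shows it as a tiny WSGI application, using the webpage1 and webpage2 paths from the example above; `REDIRECTS` and `app` are illustrative names, and this is not meant as production code.

```python
# Map of old paths to their permanent new locations.
REDIRECTS = {"/webpage1": "/webpage2"}


def app(environ, start_response):
    """WSGI app that answers moved paths with a 301 and a Location header."""
    path = environ.get("PATH_INFO", "/")
    target = REDIRECTS.get(path)
    if target is not None:
        # 301 tells browsers and crawlers the move is permanent.
        start_response("301 Moved Permanently", [("Location", target)])
        return [b""]
    start_response("200 OK", [("Content-Type", "text/plain; charset=utf-8")])
    return [b"content for " + path.encode("utf-8")]
```

To try it locally, the app could be served with `wsgiref.simple_server.make_server` from the standard library; requesting `/webpage1` should then answer with a 301 pointing at `/webpage2`.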

Moreover, if you have installed HTTPS on your website, there are four possible URLs through which your website can be accessed.

- https://www.yourwebsite.com

- https://yourwebsite.com

- http://www.yourwebsite.com

- http://yourwebsite.com

It doesn’t matter whether your website uses www or not. Ideally, however, every variant should redirect to the HTTPS version whenever a user types your website URL.
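
The rule “all four variants end up at one HTTPS address” can be expressed as a small function. This is a sketch only; `canonical_url` and its `use_www` flag are hypothetical names, and preferring the www form by default is an arbitrary choice for the example.

```python
from urllib.parse import urlsplit, urlunsplit


def canonical_url(url, use_www=True):
    """Map any http/https, www/non-www variant of a URL to one HTTPS form."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    bare = host[4:] if host.startswith("www.") else host
    host = "www." + bare if use_www else bare
    # Always force the https scheme; keep path, query, and fragment as they are.
    return urlunsplit(("https", host, parts.path, parts.query, parts.fragment))
```

A server-side redirect rule would apply the same mapping, sending a 301 whenever the requested URL differs from its canonical form.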

Internal Backlinks

Structure your website so that the primary webpages are discoverable from anywhere on it. Make your navigation robust enough to let users switch to any relevant page whenever they want. To do that, you can maintain a menu in the header of your website or place the navigation in the site-wide footer.

Link to your other webpages from within the content of relevant webpages. Whenever search engine bots come to a webpage, they scan its content and follow the links to the webpages cited there, and so on.

Through internal linking, you can make your webpages instantly accessible to crawlers. Again, the linked webpages have to be relevant so that users get an excellent experience whenever they read your content.
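
To see which internal links a page actually exposes to crawlers, you can extract them from its HTML. A minimal sketch, assuming you have the HTML and the page’s own URL; `LinkCollector` and `internal_links` are illustrative names, and “internal” here simply means “same host as the page”.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit


class LinkCollector(HTMLParser):
    """Collects the href value of every anchor element in a page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def internal_links(html, page_url):
    """Return the unique absolute URLs on the page that stay on the same host."""
    collector = LinkCollector()
    collector.feed(html)
    site_host = urlsplit(page_url).netloc.lower()
    found = []
    for href in collector.hrefs:
        absolute = urljoin(page_url, href)  # resolve relative links
        if urlsplit(absolute).netloc.lower() == site_host and absolute not in found:
            found.append(absolute)
    return found
```

Pages that never appear in any other page’s internal link list are the ones crawlers are most likely to miss.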

Off-Page SEO Analysis

Check Backlinks

All the links that point back to your website are considered its backlinks. Quality backlinks can generate a flood of relevant traffic and also improve your website’s SEO value, because search engines consider a website more relevant to a niche when more websites point to it.

To check your backlinks, you can use the popular SEMrush backlink audit tool. Other options are Moz’s backlink checker and Google Search Console.

There are two types of backlinks:

- NoFollow: NoFollow is an attribute given to a backlink that tells search engine bots not to follow the link and not to pass any link juice through it.

- DoFollow: In contrast to NoFollow links, DoFollow links let search engine bots follow them and pass link juice from the hosting website for SEO purposes.
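
In HTML, the distinction shows up in a link’s `rel` attribute: a NoFollow link carries the `nofollow` value, while a link without it is followed by default. The sketch below sorts the links in a piece of HTML accordingly; `RelClassifier` and `classify_links` are hypothetical names used for illustration.

```python
from html.parser import HTMLParser


class RelClassifier(HTMLParser):
    """Splits anchor hrefs into followed and nofollow groups."""

    def __init__(self):
        super().__init__()
        self.followed = []
        self.nofollow = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attrs = dict(attrs)
        href = attrs.get("href")
        if not href:
            return
        # rel can hold several space-separated values, e.g. "noopener nofollow".
        rel_tokens = (attrs.get("rel") or "").lower().split()
        if "nofollow" in rel_tokens:
            self.nofollow.append(href)
        else:
            self.followed.append(href)


def classify_links(html):
    """Return a dict with the 'followed' and 'nofollow' hrefs found in html."""
    parser = RelClassifier()
    parser.feed(html)
    return {"followed": parser.followed, "nofollow": parser.nofollow}
```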

Ideally, you should aim to get as many relevant DoFollow links as possible to improve your search engine rankings. However, some links may need to be removed because they add no value to your website’s SEO. To find them, you need to perform a link audit.

You can use the SEMrush backlink audit tool, which gives you a backlink report for a particular website. You can export the data to analyze the backlinks of your own or a competitor’s website.

Ensure that the NAP is Consistent

NAP stands for Name, Address, and Phone. While doing off-page SEO, you provide these details of your business to websites such as social media, citation, and profile link building sites when you register an account.

These details are usually public and can be seen by anyone on the internet. Moreover, whenever Google ranks a website that represents a local business, it considers the name, address, and phone number published across the web.

If you have given the same details to all the major platforms, your local ranking will benefit. Incorrect or inconsistent NAP values will harm your local SEO efforts and make your website and business look unreliable.
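
If you keep a list of where your business is registered, a consistency check can be scripted. The sketch below compares each listing against a reference record after light normalization (case, punctuation, phone formatting); the business details are invented, and `normalize_nap` and `inconsistent_listings` are illustrative names.

```python
import re


def normalize_nap(name, address, phone):
    """Reduce a (name, address, phone) record to a comparable form."""
    def clean(text):
        # Lowercase and collapse punctuation or extra spaces into single spaces.
        return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()

    digits = re.sub(r"\D", "", phone)  # keep only the digits of the phone number
    return (clean(name), clean(address), digits)


def inconsistent_listings(reference, listings):
    """Return the names of platforms whose NAP differs from the reference."""
    expected = normalize_nap(*reference)
    return [
        source
        for source, nap in listings.items()
        if normalize_nap(*nap) != expected
    ]
```

This deliberately treats “St” and “Street” as different, since that is exactly the kind of mismatch worth fixing; a stricter or looser comparison may suit your listings better.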

Diversify Your Anchor Text

Anchor text is the clickable text in a link that leads to a web resource. If most of your backlinks use the same anchor text, it creates a negative impression, and search engines may conclude that you have spammed backlinks with identical anchor text across the web.

The ideal practice is to diversify your anchor text so that your backlinks look natural. Along with your brand name, try to build backlinks with a variety of anchor texts across platforms.
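
Once you have exported your backlinks, you can measure how concentrated the anchor text is. This is a rough sketch, assuming the anchor texts are available as a list of strings; `anchor_text_shares` and `overused_anchors` are hypothetical names, and the 50% threshold is an arbitrary value for illustration, not a known search engine limit.

```python
from collections import Counter


def anchor_text_shares(anchors):
    """Return (anchor text, share of all backlinks) pairs, most common first."""
    normalized = [a.strip().lower() for a in anchors if a.strip()]
    counts = Counter(normalized)
    total = len(normalized)
    return [(text, count / total) for text, count in counts.most_common()]


def overused_anchors(anchors, max_share=0.5):
    """Return anchor texts that account for more than max_share of backlinks."""
    return [text for text, share in anchor_text_shares(anchors) if share > max_share]
```

An anchor text that dominates the list is a candidate for diversification in your future link building.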

Conclusion

Do let us know what you keep in mind while doing an SEO audit for a website. Everyone approaches the SEO audit process differently, but a few things must be ensured to run a successful SEO campaign.

Let us know your views in the comments.